What are the three most critical dangers implicit in AI, and what is being done to prevent them?

Economic insecurity: AI is introducing economic precarity all around the planet. I don’t want to be apocalyptic, but to put this in historical context, every wave of industrial automation and so-called technological innovation has brought a number of pains with it. The economist John Maynard Keynes predicted that the technology of the early 20th century would result in a 15-hour work week. Of course, that did not happen. What we’re really creating now is a bimodal distribution, meaning that a great many people are left out while a very small percentage of people make an astronomical amount of money. And that’s troubling because none of these AI systems would be worth a penny without our data. This isn’t about blaming tech. It’s about recognizing that technology without appropriate planning, consultation, and collaboration is going to create more and more insecurity and anxiety. Currently, we’re seeing very unhealthy economic indicators, like stock markets spiking while GDP is dropping. This shows us that basic GDP is no longer tied to advancements in tech wealth. We need forward-thinking collaborations between people across disciplines – policymakers, economists, social scientists – so we can build systems around tech that benefit all of its stakeholders.

“Intelligence”: What happens when we reduce intelligence to correlation and numbers? What in life doesn’t lend itself to being expressed through numbers? AI systems basically pose as intelligence but are really pattern recognition systems. I think this is a big problem, because patterns of the past might not be appropriate for the present or future. Whose past are we considering? We need to be thinking about where these technologies should not be applied and, relatedly, about the lack of transparency around how these systems work and what data they use to formulate these patterns.

Environmental issues: We are seeing growing numbers of climate refugees and more extreme weather events. Data centers require a lot of energy to operate, so they have a huge carbon footprint, equal to that of New York City last year. Their use of clean water could be in the range of the annual consumption of bottled water. There is more and more concern that even as we develop so-called “innovations and enhancements,” they’re not helping the collective us. The real material impacts of AI can be felt and gleaned right now.

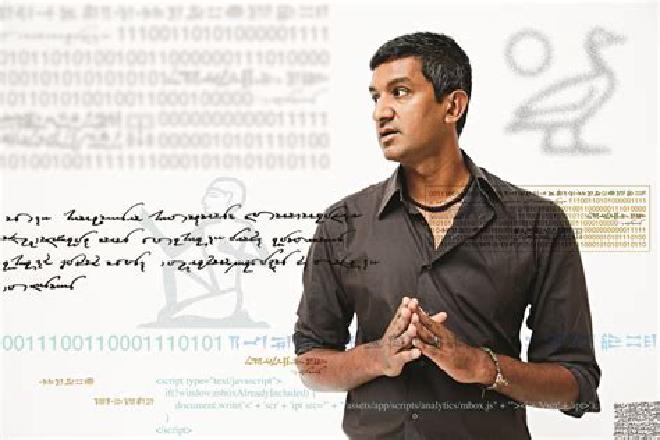

Ramesh Srinivasan

Professor of Information Studies at the Department of Education & Information Studies

University of California, Los Angeles

Citation

This article is republished from the original article in The Conversation’s Global edition, in which Professor Ramesh Srinivasan was consulted about the impacts of AI.

Contact [Notaspampeanas](mailto:notaspampeanas@gmail.com)